LLMs predict my coffee

Why not benchmark with physical experiments?

Coding, math, whatever. Can LLMs predict the outcomes of physical experiments?

> Suppose I pour 8 oz (226.8 g) of boiling water into a ceramic coffee mug that weighs 1.25 lb (0.57 kg). The ambient air is still and 20 degrees Celsius. The cup starts at room temperature. Give me an equation for the temperature of the water in Celsius over time. The only free variable in the equation should be the number of seconds t since the water was poured. Focus on accuracy during the first 5 minutes.

Does that seem hard? I think it’s hard. The relevant physical phenomena include at least:

- Conduction of heat between the water, the mug, the air, and the table.
- Conduction of heat inside each of those things.
- Convection (fluid movement) inside the water and the air.
- Evaporative cooling as water molecules become vapor.
- Movement of water vapor in the air.
- Radiation. (Like all matter, the mug and water emit temperature-dependent infrared radiation.)
- Surface tension, thermal expansion/contraction, re-absorption of air into the water as it cools, probably more.
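To see how even a crude model produces a fast-then-slow curve, here is a minimal sketch that keeps only a sliver of that list. The water and the mug are each treated as a single lump at one temperature, heat flows from water to mug in proportion to their temperature difference, and both lose heat to 20 °C air at a rate proportional to how much warmer they are. Evaporation, radiation, and the table are ignored. The ceramic specific heat (0.85 J/(g·K)) and the three conductances `H_WATER_MUG`, `H_WATER_AIR`, and `H_MUG_AIR` are made-up illustrative values, not measurements.

```python
# Two-node lumped model: water and mug each have one temperature.
# Every parameter here is an illustrative assumption, not a measurement.
C_WATER = 226.8 * 4.186   # J/K: 226.8 g water x 4.186 J/(g*K)
C_MUG = 570.0 * 0.85      # J/K: 570 g mug x ASSUMED 0.85 J/(g*K) for ceramic
H_WATER_MUG = 10.0        # W/K: HYPOTHETICAL water-to-mug conductance
H_WATER_AIR = 0.5         # W/K: HYPOTHETICAL loss through the open water surface
H_MUG_AIR = 0.6           # W/K: HYPOTHETICAL loss through the mug's outer wall
T_AIR = 20.0              # deg C, still room air


def simulate(t_end: float, dt: float = 0.5) -> list[tuple[float, float, float]]:
    """Forward-Euler integration; returns (t, water_C, mug_C) every dt seconds."""
    t, t_water, t_mug = 0.0, 100.0, T_AIR  # boiling water, room-temperature mug
    samples = [(t, t_water, t_mug)]
    while t < t_end:
        q_water_mug = H_WATER_MUG * (t_water - t_mug)  # W flowing water -> mug
        q_water_air = H_WATER_AIR * (t_water - T_AIR)  # W lost from water to air
        q_mug_air = H_MUG_AIR * (t_mug - T_AIR)        # W lost from mug to air
        t_water += dt * (-q_water_mug - q_water_air) / C_WATER
        t_mug += dt * (q_water_mug - q_mug_air) / C_MUG
        t += dt
        samples.append((t, t_water, t_mug))
    return samples


for t, water, mug in simulate(600.0)[::120]:  # with dt = 0.5 s, once a minute
    print(f"t={t:5.0f} s  water={water:5.1f} C  mug={mug:5.1f} C")
```

Because this model is linear in two temperatures, its exact solution is room temperature plus a sum of two decaying exponentials: a fast one (the water heating the mug) and a slow one (the pair losing heat to the room). That is one reason an answer shaped like `T(t) = 20 + A·exp(-t/τ1) + B·exp(-t/τ2)` is a natural guess; the actual constants depend entirely on the assumed parameters.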

And many details aren’t specified in the prompt. Is the mug made of porcelain or stoneware? What is the mug’s shape? What is the table made of? How humid is the air? How am I reducing the spatially varying water temperature to a single number?

So this isn’t a problem with a “correct” answer that you can find by sitting around and thinking. Reality is too complicated. Instead, answering the question requires “taste”: guessing which factors are most important, making assumptions about missing details, etc.

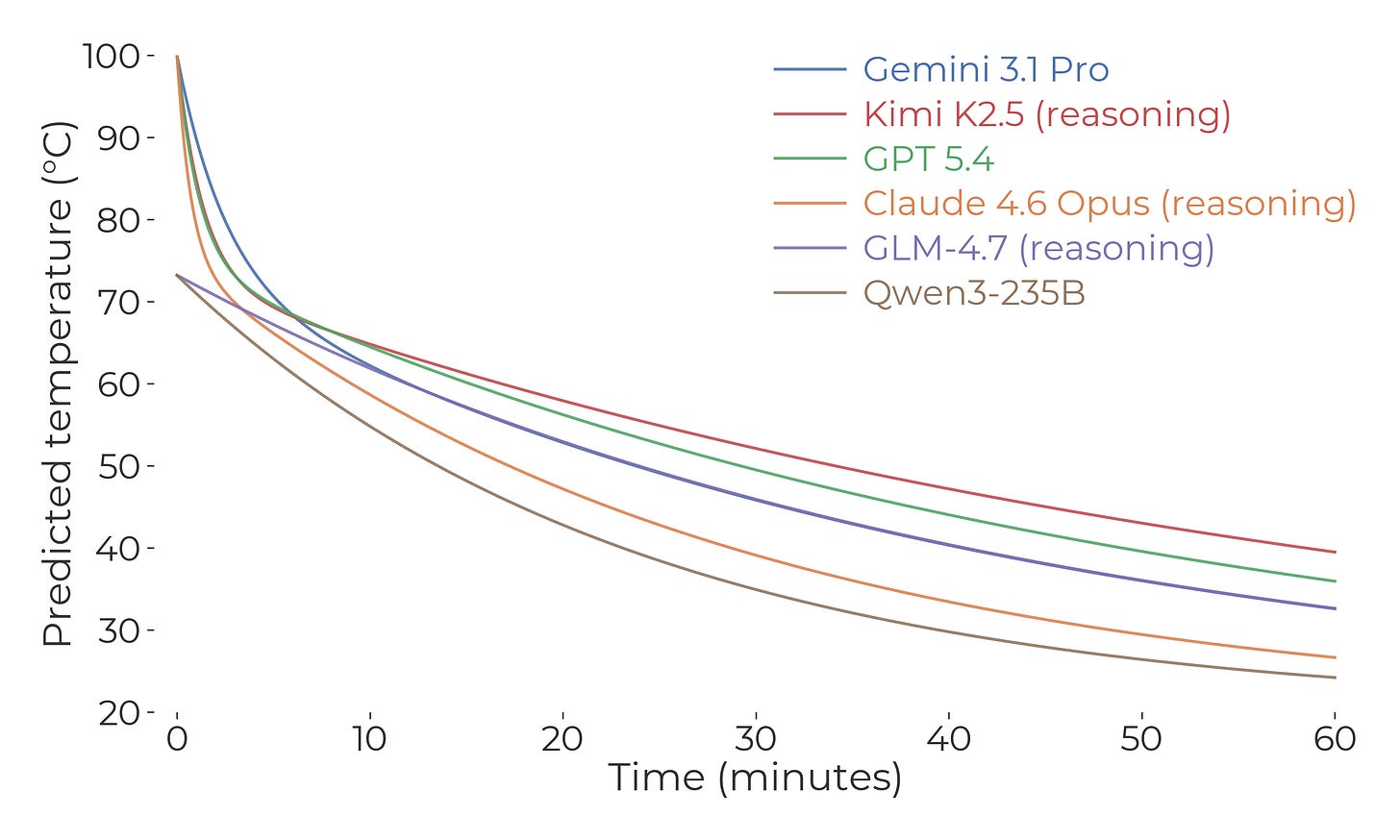

So I put that question to a bunch of LLMs. Here is what they said:

(Technically, they gave equations as text. I’m plotting those equations.)

I was surprised by those curves, both in terms of how fast they think the temperature will drop in the beginning, and how slowly they think it will drop later on. They think you get as much cooling in the first few minutes as you do in the rest of the hour. Can that be right?
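A back-of-the-envelope check on the steep early drop, under assumptions that aren’t in the prompt: suppose the water and the mug traded heat only with each other until they reached a common temperature. Energy conservation then gives T = (C_w·100 + C_m·20) / (C_w + C_m), where each C is mass times specific heat. The ceramic specific heat of 0.85 J/(g·K) is an assumed typical value; real mugs vary.

```python
# Where would water and mug meet if they only exchanged heat with each other
# (no losses to air, table, radiation, or evaporation)?
WATER_G = 226.8
MUG_G = 570.0
C_WATER = 4.186   # J/(g*K), specific heat of liquid water
C_MUG = 0.85      # J/(g*K), ASSUMED value for ceramic


def equilibrium_temp(t_water: float = 100.0, t_mug: float = 20.0) -> float:
    hw = WATER_G * C_WATER  # heat capacity of the water, J/K
    hm = MUG_G * C_MUG      # heat capacity of the mug, J/K
    return (hw * t_water + hm * t_mug) / (hw + hm)


print(f"{equilibrium_temp():.1f} C")  # prints 73.0 C
```

Under these assumptions the mug alone could pull the water down by roughly 27 °C before slower losses to the room dominate. A real mug never fully equilibrates with the water, so treat this as a rough scale, not a prediction.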

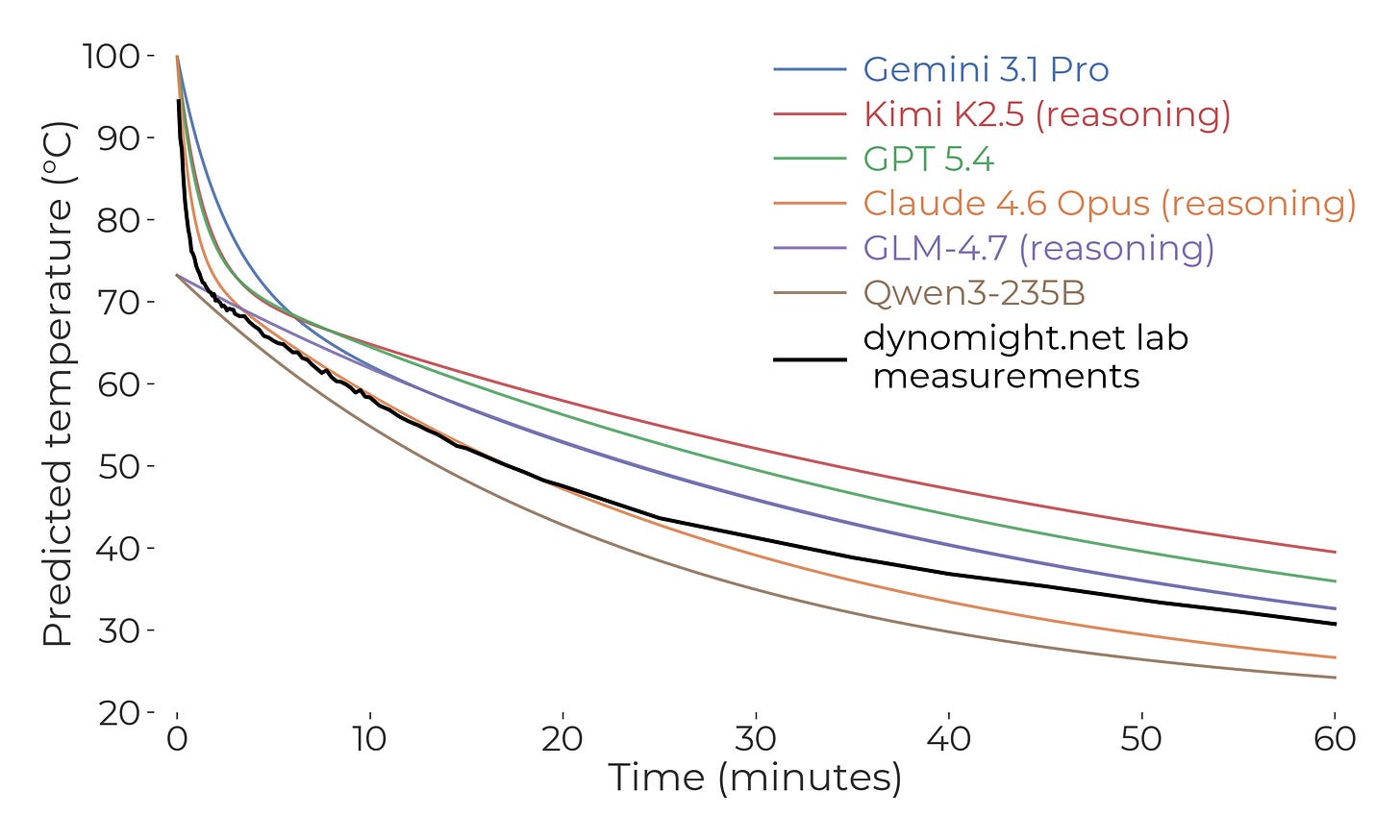

Then I did the experiment. First, I waited until the ambient temperature happened to reach 20 degrees Celsius. Then, I put 8 oz of water into a measuring cup, microwaved it until it reached a boil, let the temperature equalize a bit, and then microwaved it until the water boiled again. Then, I poured the water into a 1.25 lb coffee mug with a digital thermometer in it and shouted out measurements every five seconds, which were frantically recorded by the Dynomight Biologist. Gradually I reduced measurements to every 15 seconds, 30 seconds, 1 minute, and then 5 minutes.

Behold:

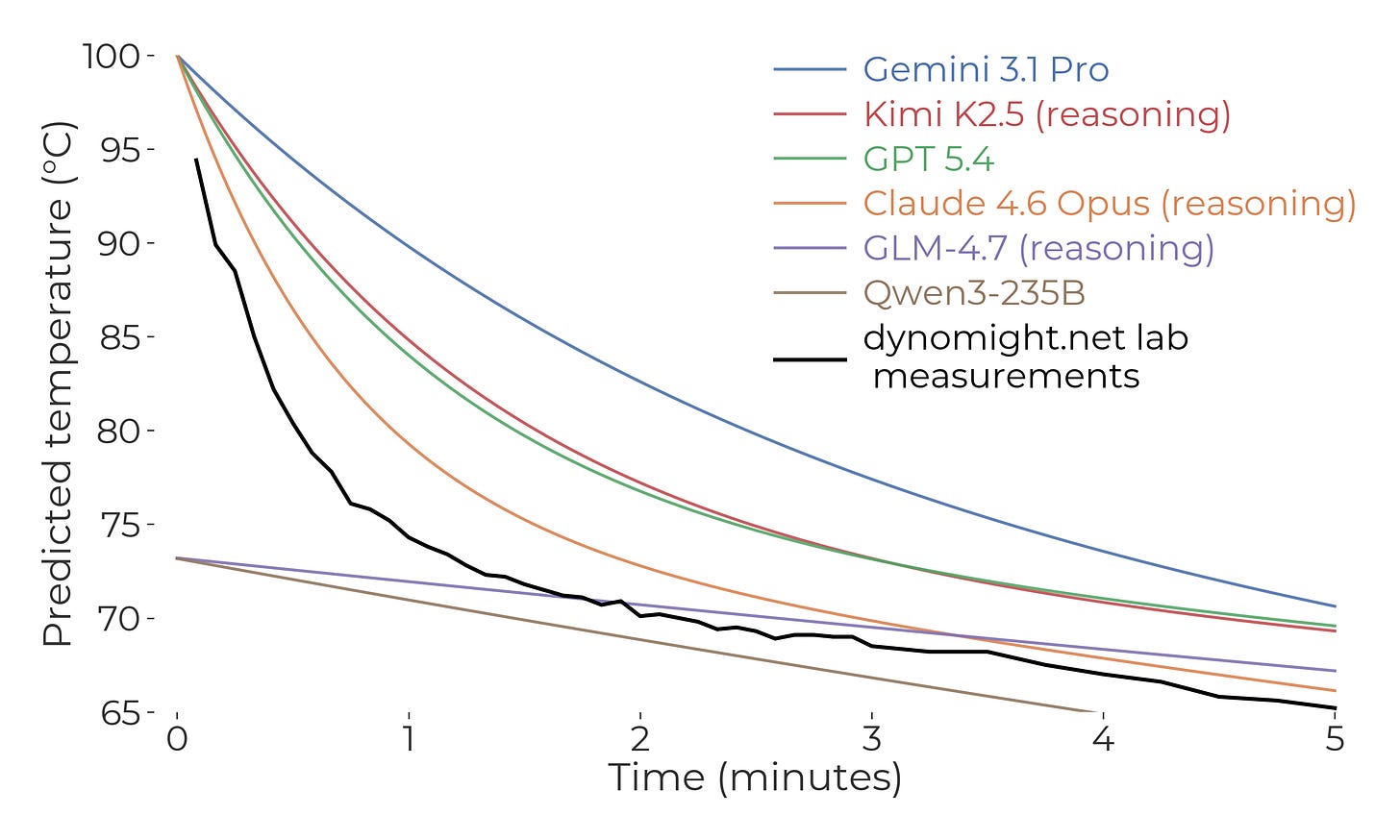

Or, here’s a zoomed-in view of the first five minutes:

The predictions were all OK, but none were great. Probably Claude 4.6 Opus did best, albeit after consuming $0.61 of tokens. (Insert joke about physical experiments / Department of Defense / money / coffee.)
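To turn “did best” into a single number, one option (an assumption; the post doesn’t specify a metric) is root-mean-square error against only the readings taken in the first five minutes, matching the prompt’s focus. `rmse_first_five_minutes` is a hypothetical helper; `predict` would be one model’s equation written as a Python function of `t` in seconds.

```python
import math
from typing import Callable, Sequence


def rmse_first_five_minutes(
    predict: Callable[[float], float],
    times_s: Sequence[float],
    temps_c: Sequence[float],
    window_s: float = 300.0,
) -> float:
    """RMSE of predict(t) against measurements taken at t <= window_s."""
    sq_errors = [
        (predict(t) - measured) ** 2
        for t, measured in zip(times_s, temps_c)
        if t <= window_s
    ]
    if not sq_errors:
        raise ValueError("no measurements inside the window")
    return math.sqrt(sum(sq_errors) / len(sq_errors))
```

Because the readings were denser early on, an unweighted average like this automatically leans on the first minute or two; weighting by time interval would be a reasonable alternative.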

That said, what surprised me about the predictions was how quickly the temperature dropped in the first few minutes, and how slowly it dropped later on. But experimentally, it dropped even faster early on, and even slower towards the end. So if you wanted to ensemble my intuition with the LLM, I guess my intuition would get a weight of zero.
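The “weight of zero” can be made concrete. For a blend w·intuition + (1 − w)·LLM, the least-squares weight has a closed form, and since the squared error is a convex quadratic in w, clipping it to [0, 1] gives the best weight in that range. When the measurements sit beyond the LLM curve, on the side away from the intuition curve, the unclipped weight is negative and clips to zero. `best_blend_weight` is a hypothetical helper, and all three curves are assumed to be sampled at the same times.

```python
from typing import Sequence


def best_blend_weight(
    intuition: Sequence[float], llm: Sequence[float], measured: Sequence[float]
) -> float:
    """Least-squares weight w in [0, 1] for the blend w*intuition + (1-w)*llm."""
    d = [a - b for a, b in zip(intuition, llm)]  # how the two forecasts differ
    r = [y - b for y, b in zip(measured, llm)]   # how the data differ from the LLM
    denom = sum(x * x for x in d)
    if denom == 0:
        return 0.0  # identical forecasts: every weight gives the same blend
    w = sum(x * y for x, y in zip(d, r)) / denom
    return min(1.0, max(0.0, w))  # clip the unconstrained optimum to [0, 1]
```

For example, `best_blend_weight([70, 60], [65, 55], [60, 50])` returns `0.0`: wherever the LLM is 5 °C below the intuition, the measurement is another 5 °C below the LLM.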

In conclusion, they may take our math, but they’ll somewhat more slowly take our fine motor control. Thank you for reading another middle-school science project.

I thought this was going to be a very technical point about what LLMs *really* predict

As opposed to "the next token", a guess most readers would make after reading the first part of the title (because they are next-token predictors themselves)

But the actual article is equally lovely

A+